Imagine recommendation systems that ditch the one-size-fits-all approach. Instead, picture conversing with a super-smart friend who knows your tastes and can suggest things you’ll enjoy. This is the future with Large Language Models (LLMs) in recommendation systems!

LLMs are like super-powered language tools. Trained on massive amounts of text, they can understand the nuances of human conversation. This lets them analyze your past choices, reviews you’ve written, and even comments you’ve left — like getting to know you through a chat. LLMs can use this knowledge to recommend things that fit your specific interests and mood.

Motivation

The explosion of recommendation systems happened as online services grew, helping users handle information overload and find better quality content. These systems aim to figure out what users like and suggest items they might enjoy. Deep learning-based recommendation systems focus on this by ranking items for users, whether it’s offering top picks or recommending things in a sequence.

On the other hand, large language models (LLMs) are remarkably good at understanding language, handling tasks such as reasoning and learning from just a few examples. They also carry a great deal of knowledge in their parameters. So, the question is: How can we use these LLMs to make recommendation systems even better?

Let’s break down the blog into four parts:

- Where to Use LLMs: We’ll explore where it’s wise to bring them into recommendation systems. Sometimes, more straightforward solutions work better, so we’ll find the right balance.

- Integrating LLMs: Here, we’ll talk about how to mix LLMs into existing recommendation setups. You can adjust LLMs to your data or keep them as is. Plus, we’ll discuss combining traditional recommendation methods with LLMs.

- Challenges: We’ll tackle the hurdles that come up in the industry when using these new methods.

- Future Improvements: Finally, we’ll discuss how to make LLMs even more helpful in recommendation systems.

Where to adapt LLMs?

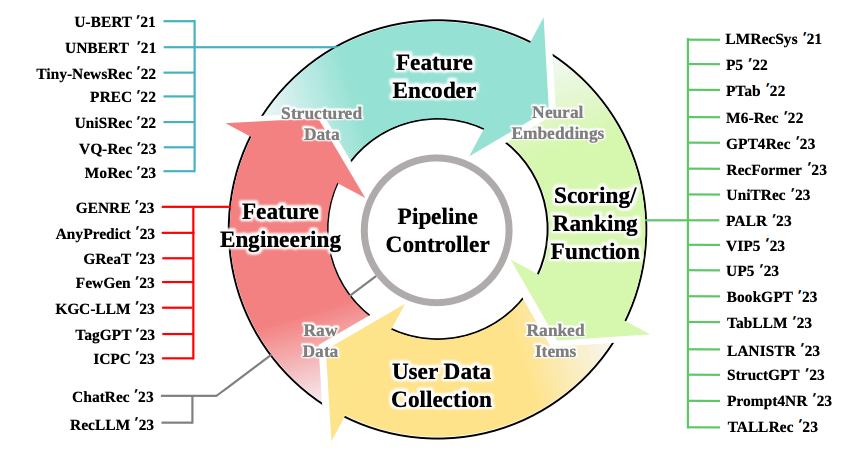

Large Language Models (LLMs), like the ones we’ve been discussing, have a lot of potential. But where can we use them? Imagine a pipeline that helps recommend things to users, like products or content. Here’s how it works:

- User Data Collection: We gather information from users. This can be explicit (like ratings) or implicit (like clicks on a website).

- Feature Engineering: We take the raw data we collected and turn it into something structured. Think of it like organizing a messy room into neat boxes.

- Feature Encoding: We create special codes (called embeddings) for the features. It’s like translating the data into a language the computer understands.

- Scoring/Ranking: We use machine learning to determine which items are most relevant to users. It’s like picking the best movie to watch from a long list.

- Pipeline Control: We manage the whole process. Imagine a traffic controller making sure everything runs smoothly.
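
To make the five stages concrete, here is a minimal sketch of the pipeline without any LLM involved. Everything in it is an assumption for illustration: the toy events, the genre table, the 0.5 weight for a click, and the function names are made up and not taken from any particular system.

```python
# Toy data; every name and value here is made up for illustration.
EVENTS = [
    ("u1", "matrix", "rating:5"),    # explicit feedback
    ("u1", "inception", "click"),    # implicit feedback
    ("u2", "titanic", "rating:4"),
]
ITEM_GENRES = {
    "matrix": ["action", "scifi"],
    "inception": ["scifi"],
    "titanic": ["romance"],
    "alien": ["scifi"],
}
GENRES = sorted({g for genres in ITEM_GENRES.values() for g in genres})

def collect(events, user):
    """User data collection: gather one user's raw signals."""
    return [(item, signal) for u, item, signal in events if u == user]

def engineer(raw):
    """Feature engineering: map each raw signal to a preference weight in [0, 1]."""
    weights = {}
    for item, signal in raw:
        if signal.startswith("rating:"):
            weights[item] = int(signal.split(":")[1]) / 5.0
        else:
            weights[item] = 0.5  # assumed weight for a click
    return weights

def encode_item(item):
    """Feature encoding: multi-hot genre vector for an item."""
    return [1.0 if g in ITEM_GENRES[item] else 0.0 for g in GENRES]

def encode_user(weights):
    """Feature encoding: weighted sum of the vectors of items the user touched."""
    vec = [0.0] * len(GENRES)
    for item, w in weights.items():
        for i, x in enumerate(encode_item(item)):
            vec[i] += w * x
    return vec

def score(user_vec, item):
    """Scoring: dot product between the user vector and the item vector."""
    return sum(u * x for u, x in zip(user_vec, encode_item(item)))

def recommend(user):
    """Pipeline control: run the stages in order and rank unseen items."""
    weights = engineer(collect(EVENTS, user))
    user_vec = encode_user(weights)
    unseen = [item for item in ITEM_GENRES if item not in weights]
    return sorted(unseen, key=lambda item: score(user_vec, item), reverse=True)

print(recommend("u1"))  # ['alien', 'titanic']
```

Each function is a slot where an LLM could later be swapped in, which is what the rest of this section walks through.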

Now, where do LLMs fit in? Well, they can help at different stages:

- Understanding User Input: LLMs can read and understand what users say, even if they type it naturally.

- Generating Better Features: LLMs can help create better features from messy data.

- Improving Scoring/Ranking: LLMs can learn from patterns and suggest better ways to rank items.

- Fine-Tuning the Pipeline: LLMs can help fine-tune the process for better results.

So, LLMs are like helpful assistants in this recommendation pipeline!

LLM for feature engineering

Here, an LLM can take the original data input and generate additional textual features to augment the data. This approach has been shown to work well with tabular data and was further extended by using LLMs to align out-of-domain datasets on a shared task. LLMs can also be used to generate tags and model user interest.
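
As a rough sketch of what tag generation could look like, the snippet below builds a prompt, sends it to an LLM, and stores the parsed tags as a new feature. The prompt wording, the `llm_tags` field, and `fake_llm` are all hypothetical; `fake_llm` is a canned stand-in so the example runs without a model, and you would pass your own client function instead.

```python
from typing import Callable, Dict, List

def build_tag_prompt(title: str, description: str) -> str:
    """Assumed prompt wording; adjust to your model and domain."""
    return (
        "List up to three short topical tags for the item below, "
        "separated by commas.\n"
        f"Title: {title}\nDescription: {description}\nTags:"
    )

def augment_item(item: Dict[str, str], llm: Callable[[str], str]) -> Dict[str, object]:
    """Return a copy of the item with LLM-generated tags added as a new feature."""
    raw = llm(build_tag_prompt(item["title"], item["description"]))
    tags: List[str] = [t.strip().lower() for t in raw.split(",") if t.strip()]
    return {**item, "llm_tags": tags[:3]}

def fake_llm(prompt: str) -> str:
    """Hypothetical stand-in for a real LLM call; always returns the same text."""
    return "Sci-Fi, Space Travel, Survival"

item = {"title": "The Martian", "description": "An astronaut is stranded on Mars."}
augmented = augment_item(item, fake_llm)
print(augmented["llm_tags"])  # ['sci-fi', 'space travel', 'survival']
```

The generated tags can then be fed to a downstream model like any other categorical feature.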

LLM as feature encoder

In conventional recommendation systems, the data is usually transformed into one-hot encodings, and an embedding layer is added to produce dense embeddings. With LLMs, we can improve feature encoding in two ways: the semantic information they extract gives downstream models better representations, and it enables better cross-domain recommendations where feature pools might not be shared.

For example, UNBERT uses BERT to improve news recommendations via better feature encoding. ZESRec applies BERT to convert item descriptions into continuous representations for zero-shot recommendation.
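
The cross-domain point can be illustrated with a small sketch. Item IDs from two catalogs share nothing, but text descriptions live in a common space. Note the assumptions: the catalogs are made up, and `encode_text` is a toy bag-of-words encoder standing in for a BERT-style model; it is not how UNBERT or ZESRec encode items.

```python
import numpy as np

# Two catalogs from different domains with disjoint item IDs (made-up data).
store_a = {"a1": "wireless noise cancelling headphones"}
store_b = {"b7": "noise cancelling wireless earbuds", "b9": "cast iron frying pan"}

def build_vocab(texts):
    """Shared word vocabulary; words overlap across catalogs even when IDs do not."""
    words = sorted({w for t in texts for w in t.lower().split()})
    return {w: i for i, w in enumerate(words)}

def encode_text(text, vocab):
    """Toy stand-in for an LLM encoder: L2-normalised bag-of-words vector."""
    vec = np.zeros(len(vocab))
    for w in text.lower().split():
        vec[vocab[w]] += 1.0
    return vec / np.linalg.norm(vec)

vocab = build_vocab(list(store_a.values()) + list(store_b.values()))
emb = {i: encode_text(t, vocab) for i, t in {**store_a, **store_b}.items()}

# Cosine similarity (the vectors are already normalised).
sim_related = float(emb["a1"] @ emb["b7"])    # 0.75: three of four words shared
sim_unrelated = float(emb["a1"] @ emb["b9"])  # 0.0: no words shared
print(sim_related, sim_unrelated)
```

With one-hot ID features, `a1` and `b7` would be unrelated by construction. A real text encoder plays the same role as the toy one here, with much richer semantics.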

LLM for scoring/ranking

A commonly explored approach is to use the LLM to rank items by relevance. Many methods use a pipeline in which the LLM output is fed into a projection layer that computes the score for a regression or classification task. More recently, some researchers have proposed having the LLM deliver the score directly. TALLRec, for example, uses the LLM as a decoder to answer a binary question appended to the prompt; another team had the LLM predict a score as text and controlled its format with careful prompting.
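
The two scoring styles can be sketched side by side. This is only an illustration under stated assumptions: `projection_score` assumes you already have a hidden-state vector from the LLM plus learned weights `w` and `b`, and the prompt in `binary_prompt` is hypothetical wording in the spirit of a binary question, not TALLRec's actual template.

```python
from typing import List, Optional
import numpy as np

def projection_score(hidden: np.ndarray, w: np.ndarray, b: float) -> float:
    """Pipeline style: project an LLM hidden state to a probability with a sigmoid."""
    logit = float(hidden @ w + b)
    return float(1.0 / (1.0 + np.exp(-logit)))

def binary_prompt(history: List[str], candidate: str) -> str:
    """Direct style: append a yes/no question to the user's history (assumed wording)."""
    return (
        f"The user liked: {', '.join(history)}.\n"
        f"Will the user like {candidate}? Answer Yes or No:"
    )

def parse_binary_answer(text: str) -> Optional[int]:
    """Map the generated text to 1 (yes), 0 (no), or None if it is neither."""
    words = text.strip().lower().split()
    first = words[0].strip(".,!") if words else ""
    if first == "yes":
        return 1
    if first == "no":
        return 0
    return None

print(projection_score(np.zeros(4), np.ones(4), 0.0))  # 0.5
print(parse_binary_answer("Yes."))                      # 1
print(parse_binary_answer("Not sure"))                  # None
```

Parsing the generated text defensively matters in the direct style, since nothing forces the model to answer in the requested format.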

LLMs can also be used successfully for direct item generation. This would be similar to our approach in the previous blog post. These approaches can also be hybridized and used in tandem.

LLM as a pipeline controller

This approach largely stems from the notion that LLMs possess emergent properties, that is, they can perform tasks that smaller models cannot, such as in-context learning and logical reasoning. However, you should be aware that many researchers actively dispute the claim that LLMs possess any emergent abilities. They argue that these are instead a product of imperfect statistical methods that bias the evaluation, suggesting that emergence may not be a fundamental property of scaling AI models.

Some researchers even suggested a complete framework that utilizes the LLM to manage the dialogue, understand user preferences, arrange the ranking stage, and simulate user interaction.

You may have noticed that the user data collection stage is missing from this list. Not much work has been done to explore the LLM’s potential in this domain. Here, LLMs could filter biased or hateful data, select the best representations, or find meaningful, relevant information in a sea of input. They could also be used in surveys as customer experience collectors, among other things.

How to adapt LLMs?

Now that we know where to include the models in our pipeline, let’s talk about how we can do so.

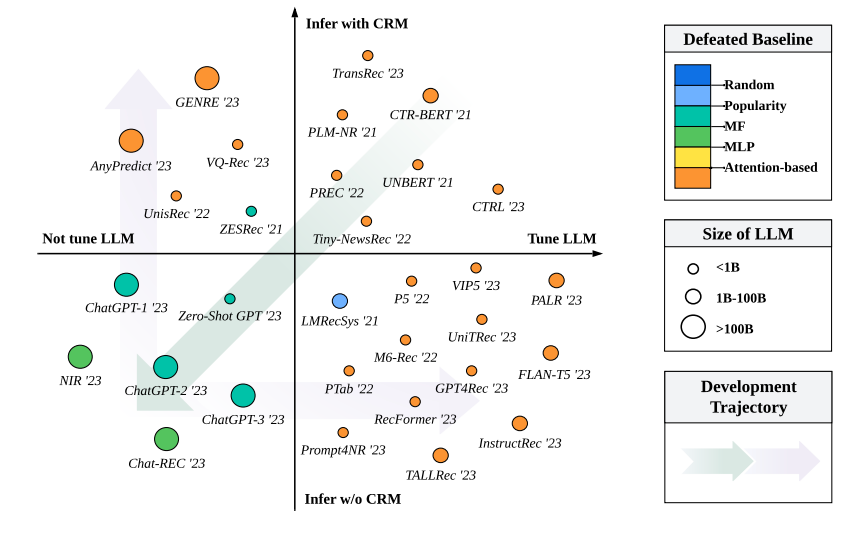

Generally, there are four cases. The diagram above shows how current approaches are distributed along two choices: whether the LLM is tuned during the training phase (this includes efficient methods like adapters and LoRA), and whether a conventional recommendation model (CRM) is used as the recsys engine at inference. By quadrant (the figure also plots the approaches by performance):

- Quadrant 1: approaches that tune the LLM and infer with a CRM. What is notable here is the small model size. These methods use LLMs as feature encoders for semantic representations; this allows for a lightweight setup, but the key abilities of larger models (reasoning, instruction following) remain unexplored.

- Quadrant 2: approaches that infer with a CRM but do not tune the LLM. They draw on different abilities of LLMs (reasoning, richer semantic information) and use the CRM either to prerank items before passing information to the LLM or as a layer on top of the LLM output.

- Quadrant 3: approaches that neither tune the LLM nor use a CRM. They investigate the zero-shot performance of LLMs and rely heavily on larger model sizes, on the idea that larger models have a better latent space. They lag in performance and efficiency behind lightweight CRMs tuned on training data, which indicates the importance of domain knowledge.

- Quadrant 4: approaches that tune the LLM and infer without a CRM. They are similar to quadrant 1 in that they use lighter model sizes, but they go beyond the simple encoder paradigm and use LLMs in a greater capacity in the recsys pipeline.

Collaborative knowledge

From the diagram above, we can observe the performance gap between group 3 and groups 1, 2, and 4: although group 3 has the largest model sizes, recommending items requires specialized domain knowledge, so finetuning does increase performance. This means you can either tune the LLM during training to inject in-domain collaborative knowledge, or inject this information via a CRM from a model-centric perspective during inference.

Reranking hard samples

As demonstrated, larger models used zero-shot do not work well in a recsys context. However, researchers found that they are surprisingly good at reranking hard samples. They introduce the filter-then-rerank paradigm, which uses a pre-ranking function from conventional recommender systems (e.g., the matching or pre-ranking stage in industrial applications) to filter out the easier negative items and create a candidate set of harder samples for the LLM to rerank. This should improve the LLM’s listwise reranking efficiency, particularly for ChatGPT-like APIs. This finding is useful in industrial settings, where we may want the LLM to handle only the hard samples and leave the rest to lightweight models to reduce computational cost.
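
A minimal sketch of filter-then-rerank might look like this. The assumptions: `prerank_scores` comes from whatever lightweight model you already run, `llm_rerank` is a hypothetical callable wrapping your LLM, and `fake_llm_rerank` simply reverses its input so the example runs without a model.

```python
from typing import Callable, Dict, List

def filter_then_rerank(
    prerank_scores: Dict[str, float],
    llm_rerank: Callable[[List[str]], List[str]],
    k: int,
) -> List[str]:
    """Keep the top-k candidates from a cheap model and let the LLM reorder only those."""
    ordered = sorted(prerank_scores, key=lambda item: prerank_scores[item], reverse=True)
    hard, easy = ordered[:k], ordered[k:]

    reranked = llm_rerank(hard)
    # Guard against the LLM dropping, duplicating, or inventing items.
    valid = list(dict.fromkeys(i for i in reranked if i in hard))
    missing = [i for i in hard if i not in valid]
    # Items filtered out by the cheap model stay below the LLM-ranked ones.
    return valid + missing + easy

def fake_llm_rerank(candidates: List[str]) -> List[str]:
    """Hypothetical stand-in for a listwise LLM reranker: reverses the list."""
    return list(reversed(candidates))

scores = {"a": 0.9, "b": 0.8, "c": 0.7, "d": 0.1, "e": 0.05}
print(filter_then_rerank(scores, fake_llm_rerank, k=3))  # ['c', 'b', 'a', 'd', 'e']
```

The LLM only ever sees `k` items per request, so its cost stays bounded no matter how large the candidate pool is.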

Does size matter?

It is hard to tell. When finetuned on domain data, both large and small models offer comparably good performance. Because there is no unified recsys benchmark for measuring the relative success of larger and smaller models, this question is still open. Depending on your application and where in the pipeline you want to include the LLM, you should select a different model size.

Challenges from the industry

But all of this is still in an academic setting; industry brings serious, specific engineering challenges that I want to mention here. The first is training efficiency. Recall the articles about the training cost of GPT-3 and GPT-4, and you can already start to get a headache about the cost of repeatedly finetuning your LLM on a recommendation data pool that is constantly shifting and expanding. So you have growing data volume + high model update frequency (day-level, hour-level, minute-level) + underlying LLM size = trouble. To address this, parameter-efficient finetuning methods are recommended.
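
To see why efficient finetuning helps, here is a back-of-the-envelope sketch of the LoRA idea mentioned earlier: freeze the original weight matrix and train only a low-rank update. The layer size and rank are assumed values, and the usual LoRA scaling factor is left out for brevity, so treat this as an illustration of the parameter count rather than a training recipe.

```python
import numpy as np

rng = np.random.default_rng(0)
d_in, d_out, rank = 512, 512, 8  # assumed sizes for one linear layer

W = rng.normal(size=(d_out, d_in))             # pretrained weight, kept frozen
A = rng.normal(scale=0.01, size=(rank, d_in))  # trainable low-rank factor
B = np.zeros((d_out, rank))                    # trainable, zero-initialised

def forward(x: np.ndarray) -> np.ndarray:
    """Frozen layer plus low-rank update: W x + B (A x)."""
    return W @ x + B @ (A @ x)

full_params = W.size            # 262144
lora_params = A.size + B.size   # 8192
print(lora_params / full_params)  # 0.03125

# With B initialised to zero, the adapted layer starts out identical to the original.
x = rng.normal(size=d_in)
print(np.allclose(forward(x), W @ x))  # True
```

In this toy layer, each finetuning round touches about 3% of the weights, which is what makes frequent updates more affordable.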

The benefit of larger models is that they produce more generalized and reliable output from just a handful of supervised examples. Researchers suggest adopting the long-short update strategy when we leverage the LLM for feature engineering and feature encoding. This cuts down the training data volume and relaxes the update frequency for the LLM (e.g., week-level) while maintaining complete training data and a high update frequency for the CRM. This way, the LLM can give the CRM aligned domain knowledge, and the CRM can act as a frequently updated adapter for the LLM.
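
One way to picture the long-short update strategy is as a simple schedule. The function below is a hypothetical sketch: the seven-day period and the 10% sample of training data for the LLM are assumed values, not recommendations from the research.

```python
def plan_updates(day: int, llm_period_days: int = 7, llm_data_fraction: float = 0.1) -> dict:
    """Decide which component to retrain on a given day and on how much data."""
    return {
        # Short cycle: the CRM is refreshed every day on the complete data.
        "crm": {"update": True, "data_fraction": 1.0},
        # Long cycle: the LLM is refreshed rarely, on a reduced sample.
        "llm": {"update": day % llm_period_days == 0, "data_fraction": llm_data_fraction},
    }

for day in (6, 7, 8):
    plan = plan_updates(day)
    print(day, plan["crm"]["update"], plan["llm"]["update"])
# 6 True False
# 7 True True
# 8 True False
```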

The second challenge is inference latency, something I have dealt with myself. Recommendation systems are usually real-time, extremely time-sensitive services, where all stages (matching, ranking, etc.) should complete within tens of milliseconds. LLMs are known for their long inference times, so this creates a problem. To address this, we recommend caching and precomputing, which have proven effective; for example, you can cache the dense embeddings produced by an LLM.
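
A minimal sketch of embedding caching is shown below. The `EmbeddingCache` class and `slow_encoder` are hypothetical: the encoder is a cheap stand-in for an expensive LLM call, and a production setup would more likely use an external key-value store than an in-process dictionary.

```python
import numpy as np

class EmbeddingCache:
    """Stores item embeddings so the expensive encoder runs once per item."""

    def __init__(self, encoder):
        self._encoder = encoder
        self._store = {}
        self.encoder_calls = 0

    def get(self, item_id: str, text: str) -> np.ndarray:
        if item_id not in self._store:
            self.encoder_calls += 1
            self._store[item_id] = self._encoder(text)
        return self._store[item_id]

    def precompute(self, items: dict) -> None:
        """Offline step: fill the cache before any user request arrives."""
        for item_id, text in items.items():
            self.get(item_id, text)

def slow_encoder(text: str) -> np.ndarray:
    """Stand-in for an LLM encoder; a real one would take far longer."""
    return np.array([len(text), text.count(" ")], dtype=float)

catalog = {"i1": "space survival movie", "i2": "romantic drama"}
cache = EmbeddingCache(slow_encoder)
cache.precompute(catalog)

# At request time the lookup is a dictionary hit, not a model call.
vec = cache.get("i1", catalog["i1"])
print(cache.encoder_calls)  # 2
```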

Another good strategy is reducing the model size for final inference via techniques like distillation and quantization; this introduces a tradeoff between model size and performance, so a balance has to be found. Alternatively, LLMs can be used only for feature engineering, which adds no extra computational burden to the inference phase.
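
The size versus accuracy tradeoff of quantization can be seen in a few lines. This sketch uses simple symmetric per-tensor int8 quantization on a random matrix; it is one basic scheme chosen for illustration, and real toolkits offer more refined variants.

```python
import numpy as np

def quantize_int8(weights: np.ndarray):
    """Symmetric per-tensor quantization: float32 weights to int8 plus one scale."""
    max_abs = float(np.abs(weights).max())
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    q = np.clip(np.round(weights / scale), -127, 127).astype(np.int8)
    return q, scale

def dequantize(q: np.ndarray, scale: float) -> np.ndarray:
    """Recover approximate float32 weights from the int8 values."""
    return q.astype(np.float32) * scale

rng = np.random.default_rng(0)
w = rng.normal(size=(256, 256)).astype(np.float32)  # made-up weight matrix

q, scale = quantize_int8(w)
w_hat = dequantize(q, scale)

print(w.nbytes // q.nbytes)              # 4: int8 storage is a quarter of float32
max_err = float(np.abs(w - w_hat).max())
print(max_err <= scale / 2 + 1e-4)       # True: error is bounded by half a step
```

The memory saving is fixed at 4x here, while the rounding error per weight is at most half a quantization step; whether that error hurts recommendation quality has to be measured on your own data.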

The third challenge is dealing with long-text inputs. LLMs usually require prompts, and since a lot of user data is collected, industrial recsys generally needs a longer user history in those prompts. However, LLMs tend to perform worse on long textual inputs. This may be partly because the original training corpus contained shorter inputs and the distribution of in-domain text differs from the original training data. Additionally, longer texts can cause memory inefficiency and exceed the context window, causing the LLM to forget information and produce inferior output.
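
One simple mitigation is to trim the user history to a token budget before building the prompt, keeping the most recent interactions. The sketch below is an assumption-laden illustration: it counts tokens by splitting on whitespace, which is only a crude proxy for a real tokenizer, and keeping the newest entries is just one possible policy.

```python
from typing import Callable, List

def fit_history(
    history: List[str],
    budget: int,
    count_tokens: Callable[[str], int] = lambda s: len(s.split()),
) -> List[str]:
    """Keep the most recent entries whose total token count fits the budget."""
    kept, used = [], 0
    for entry in reversed(history):      # walk from newest to oldest
        cost = count_tokens(entry)
        if used + cost > budget:
            break
        kept.append(entry)
        used += cost
    return list(reversed(kept))          # restore chronological order

history = [
    "watched Alien",
    "rated Titanic 2 stars",
    "watched The Matrix",
    "rated Inception 5 stars",
]
print(fit_history(history, budget=8))
# ['watched The Matrix', 'rated Inception 5 stars']
```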

In the same category, ID indexing should be mentioned. Recall that large amounts of recsys data, such as IDs, carry no semantic information. Here, approaches are divided into two camps. One completely abandons non-semantic IDs and instead builds interfaces using natural language alone; this seems to improve cross-domain performance and generalization. The other potentially sacrifices these gains in favor of in-domain performance, either by introducing new embedding methods that account for IDs, as P5 does, by clustering related IDs together, or by attaching semantic information to them.

Last but not least are fairness and bias. The underlying bias of LLMs is an active research area. Both further finetuning and the choice of foundation model have to account for bias in the data to make recommendations fair and appropriate. Careful design is needed to address the impact of sensitive attributes (gender, race) and to focus the model on historical user data, possibly through filtering and deliberately designed prompts.
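
As one small illustration of filtering combined with prompt design, the sketch below drops sensitive fields from a user profile before it reaches the prompt and instructs the model to rely on interaction history. The field list, profile, and prompt wording are all assumptions, and this step alone does not remove bias the model may infer from other signals.

```python
from typing import Dict, List

SENSITIVE_FIELDS = {"gender", "race"}  # assumed list; extend per application

def build_prompt(profile: Dict[str, str], history: List[str]) -> str:
    """Build a recommendation prompt that leaves sensitive attributes out."""
    safe = {k: v for k, v in profile.items() if k not in SENSITIVE_FIELDS}
    profile_text = ", ".join(f"{k}: {v}" for k, v in sorted(safe.items()))
    history_text = "; ".join(history)
    return (
        "Recommend one item based only on the interaction history.\n"
        f"Profile: {profile_text}\n"
        f"History: {history_text}\n"
        "Recommendation:"
    )

profile = {"gender": "female", "race": "asian", "language": "en"}
prompt = build_prompt(profile, ["watched Alien", "rated Inception 5 stars"])
print(prompt)
```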

Conclusions and the Future

This post covered the landscape of current LLM approaches in recsys. This area of research is very fresh, and no doubt in the coming years we will see new developments addressing the challenges and potential mentioned in this blog post. As a takeaway, two great directions for the future of LLMs in recsys are:

- A unified benchmark, which is urgently needed to provide convincing evaluation metrics and allow fine-grained comparison among existing approaches, especially since many experimental results of recsys with LLMs are expensive to reproduce.

- A custom large foundation model tailored for recommendation domains, which can take over the entire recommendation pipeline, enabling new levels of automation in recsys.